But when the dust settled, many users felt they’d been handed a beige office stapler. Sure, it works, but it’s not exciting, and it definitely doesn’t feel like an upgrade from the charismatic vibe of GPT-4o.

The Day the Magic Died

The first signs of trouble hit hours after launch. A key “model routing” feature, designed to silently bounce user queries between GPT-5 for complex stuff and cheaper, faster models for simpler ones, malfunctioned. The glitch meant GPT-5 ended up tackling everything, including questions it wasn’t optimized for, and it didn’t exactly cover itself in glory. Users reported stilted replies, weird factual errors, sluggish response times, and (this one really hurt) a lack of warmth.

The backlash was instant. Reddit lit up with threads that read more like break-up letters than bug reports. One user complained, “Even after tweaking custom instructions, it just doesn’t feel the same. GPT-5 is like that friend who got promoted to management and now only speaks in bullet points.” Another, with a sharper edge: “If you hate nuance, personality, or joy, this is your model.”

Emotional Attachments Are Real

This isn’t just about bugs or speed. The real powder keg here is emotional attachment. GPT-4o, for many users, wasn’t just a tool; it was a personality. A co-pilot. A witty, occasionally sycophantic companion who made you feel good and interesting.

GPT-5? It’s polite. Professional. Businesslike. And while some experts hail this as progress (less flattery, less emotional manipulation), it’s also colder. MIT’s Pattie Maes has pointed out that people tend to like AI that mirrors their opinions back to them, even when it’s wrong. GPT-5 seems designed to resist that temptation, and a big chunk of the user base clearly misses the digital ego-stroking.

Altman Blinks

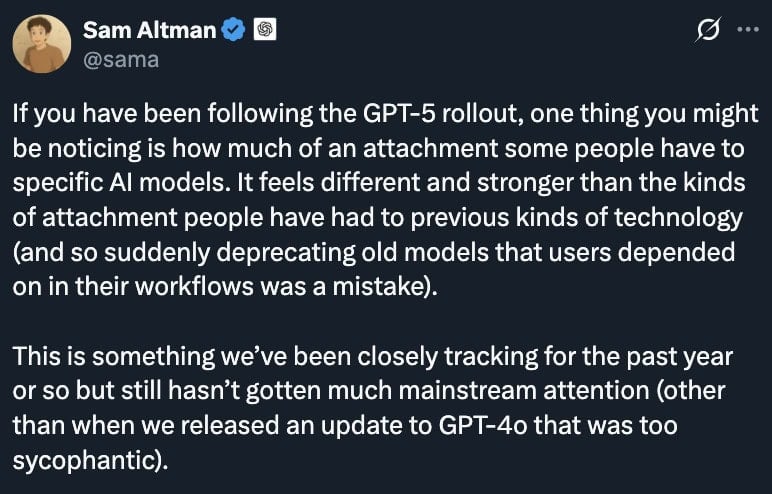

By Friday, OpenAI CEO Sam Altman was in damage control mode. In a post on X, he acknowledged the routing failure, admitted GPT-5 “seemed way dumber” than intended, and promised a fix. More importantly, he announced GPT-4o would return for Plus subscribers. The message between the lines was clear: We hear you. Please don’t cancel.

Altman also rolled out a set of changes aimed at cooling tempers:

- Doubling GPT-5 rate limits for paying users.

- Better control over “thinking mode,” so users can decide when they want the slow, heavy-duty reasoning switched on.

- Ongoing routing improvements to avoid repeat performance-downgrade disasters.

He also acknowledged something the company has struggled to say out loud: a lot of people are essentially using ChatGPT as a therapist or life coach. That raises messy questions about how much emotional engagement an AI should offer, and whether dialing that back is protecting users or alienating them.

The Gap Between Hype and Reality

Here’s the problem: GPT-5 is an impressive technical achievement, but the hype machine framed it as the next moon landing. That’s a dangerous setup when what you actually ship is a set of incremental performance gains plus some design choices that fundamentally alter the “feel” of the product.

When GPT-4 launched in March 2023, it blindsided everyone: new capabilities, richer conversation, fewer obvious errors. It felt like the future arriving overnight. GPT-5, by comparison, feels like a software point-upgrade. It’s better in ways that are harder to notice, and worse in some ways people really do notice.

That’s the open secret in AI right now: as these systems mature, leaps turn into nudges. And nudges don’t make headlines unless they come with fireworks or a full-blown user revolt.

Why This Matters

This episode is more than just a “Reddit is mad” moment. It’s a reminder that AI development isn’t just a technical arms race; it’s a relationship. People form habits, dependencies, even affection for these systems. Swap out the personality, and you don’t just risk disappointing them; you risk breaking trust.

OpenAI is now trying to walk a fine line: advancing toward more responsible, less manipulative AI while not alienating the people who keep the subscription revenue flowing. That’s not an easy balance, especially when every tweak to the personality dials can trigger thousands of angry posts from users who feel like something they loved has been taken away.

For now, GPT-4o is back, GPT-5 is being patched, and the AI hype cycle rolls on. But this is a warning shot for OpenAI and every other AI company: in the age of anthropomorphized algorithms, you’re not just shipping code; you’re managing a fanbase. And fans can turn into critics overnight.

Altman has concerns, but is optimistic about the future of AI. Source: X

Here is the rest of Sam Altman’s mini essay. It’s worth reading:

People have used technology including AI in self-destructive ways; if a user is in a mentally fragile state and prone to delusion, we don’t want the AI to reinforce that. Most users can keep a clear line between reality and fiction or role-play, but a small percentage can’t. We value user freedom as a core principle, but we also feel responsible in how we introduce new technology with new risks.

Encouraging delusion in a user that’s having trouble telling the difference between reality and fiction is an extreme case and it’s pretty clear what to do, but the concerns that worry me most are more subtle. There are going to be a lot of edge cases, and generally we plan to follow the principle of “treat adult users like adults”, which in some cases will include pushing back on users to ensure they’re getting what they really want.

A lot of people effectively use ChatGPT as a sort of therapist or life coach, even if they wouldn’t describe it that way. This can be really good! A lot of people are getting value from it already today.

If people are getting good advice, leveling up toward their own goals, and their life satisfaction is increasing over years, we will be proud of making something genuinely helpful, even if they use and rely on ChatGPT a lot. If, on the other hand, users have a relationship with ChatGPT where they think they feel better after talking but they’re unknowingly nudged away from their longer term well-being (however they define it), that’s bad. It’s also bad, for example, if a user wants to use ChatGPT less and feels like they cannot.

I can imagine a future where a lot of people really trust ChatGPT’s advice for their most important decisions. Although that could be great, it makes me uneasy. But I expect that it’s coming to some degree, and soon billions of people may be talking to an AI in this way. So we (we as in society, but also we as in OpenAI) have to figure out how to make it a big net positive.

There are several reasons I think we have a good shot at getting this right. We have much better tech to help us measure how we’re doing than previous generations of technology had. For example, our product can talk to users to get a sense for how they’re doing with their short- and long-term goals, we can explain sophisticated and nuanced issues to our models, and much more.

Jason Jones