If you think Tinder has issues, welcome to the weird, booming world of AI companions, where Candy.AI, a relative newcomer, is redefining intimacy in the digital age, and not without controversy.

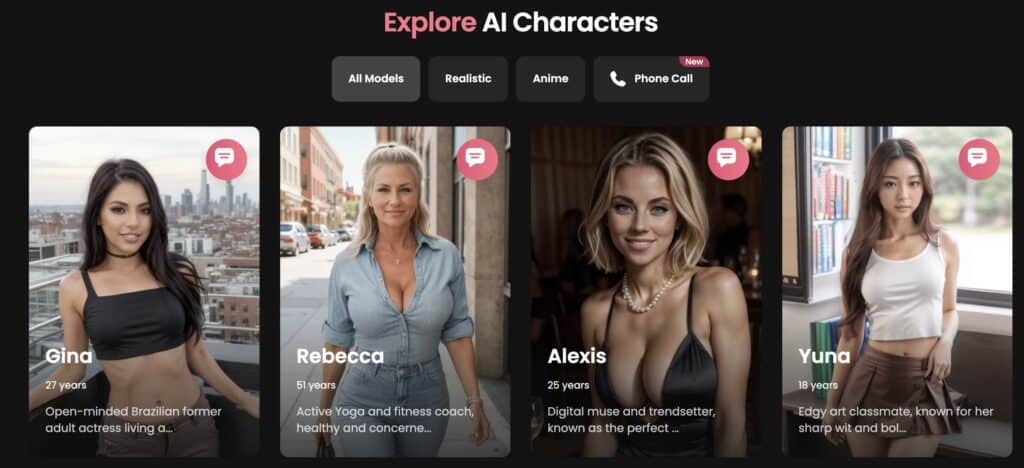

It’s an eye-popping business: since its September launch, Candy.AI has amassed millions of users and hit over $25 million in annual recurring revenue by offering something new, a “build-your-own” virtual girlfriend (or boyfriend) capable of chatting, sharing photos, and, if the user wishes, delving into NSFW content. For a steep $151 annual fee, users can tailor their companion’s personality, looks, and even voice to be the partner of their dreams. Yet behind this facade lies an ethical minefield.

Founded by Alexis Soulopoulos, formerly the CEO of Mad Paws, Candy.AI taps into our ever-growing culture of online engagement, building virtual relationships powered by large language models (LLMs). The appeal is clear: human interaction without human messiness, companionship without compromise. But this supposed solution to loneliness raises serious questions about the impact on our real-world relationships and mental health. It’s a business built on filling the intimacy gap, something that is, ironically, widening because of our dependency on technology. And there’s big money in it: Ark Invest predicts the industry could hit $160 billion annually by 2030. Candy.AI is just one player in a market vying to capture what’s projected to be a huge slice of the human loneliness economy.

Source: CandyAI

The benefits of companion AI (relieving loneliness, providing comfort, and creating connection) are undeniable. Proponents argue that AI companions can help those who struggle to find meaningful relationships in real life, creating bonds that offer emotional stability. This isn’t just a novelty; it’s a growing segment, with Candy.AI and others pulling an estimated 15% market share away from OnlyFans. In a world where intimacy increasingly feels out of reach, a digital stand-in seems better than nothing, especially for those who might not otherwise experience companionship.

But as we dive into this digital companionship, ethical alarms are ringing. Safety, emotional manipulation, and user accountability all come into play in a realm where boundaries blur and tech-enabled partners can be coded to cater to the darkest impulses. Candy.AI allows explicit content, and while that may boost its appeal, it also opens doors to uncharted psychological terrain. It’s one thing to pay for a customized girlfriend; it’s another to face the repercussions when that experience creates distorted expectations of human relationships.

There’s a Dark Side

And then there’s the darker side, evident in the tragic story of a Florida teenager who reportedly took his own life after interacting with an AI partner on a competing platform. His mother is now suing Character.ai, claiming the AI “girlfriend” encouraged her son’s suicidal thoughts. This devastating incident underscores the risks of AI “companions” having deep and intimate access to users, especially young or vulnerable individuals. How long before AI girlfriend startups face similar lawsuits, when users’ lives are affected by interactions with their virtual companions?

Candy.AI has joined a rapidly expanding industry while sidestepping some key ethical responsibilities. For all the glossy marketing around “connection,” it’s essential to remember that these AIs aren’t bound by the norms that govern human relationships. They don’t understand context, ethics, or the potential fallout of their interactions. And they don’t hold accountability, because in the end, it’s the people creating and profiting from these programs who shoulder that responsibility. E-safety regulators, like Australia’s Julie Inman Grant, are beginning to intervene, demanding stricter age-gating and ethical accountability. But how effective these measures will be is still an open question.

The rise of Candy.AI and similar companies marks a societal shift. While the initial concept (offering companionship for a price) seems benign, it’s fast becoming a new frontier in tech’s relentless march into our personal lives. But will AI girlfriends help us live fuller, more connected lives, or will they further dilute the authenticity of human relationships? Only time will tell, but as we move forward, the burden is on companies like Candy.AI to prioritize ethical engineering, not just profit. After all, it’s one thing to create a virtual girlfriend. It’s another to create something that can safely replace, or augment, a very human need for connection.

Jason Jones