On June 4, followers of the Houston Museum of Natural Science (HMNS) witnessed something that upset conventional wisdom about social media security. The museum’s verified Instagram account, complete with its checkmark and institutional credibility, was hijacked and used to promote a fake Bitcoin giveaway featuring deepfake videos of Elon Musk promising $25,000 in BTC.

Within hours, the museum regained control and deleted the posts. But the damage exposed a harsh reality: everything we’ve been told about protecting ourselves from crypto scams is rapidly becoming outdated.

The Uncomfortable Truth About “Best Practices”

For years, security experts have preached the same mantras: “Only follow verified accounts,” “Trust established institutions,” and “Be skeptical of get-rich-quick schemes.” That is sound advice for things like the cloud mining and dating scams we’re all familiar with. Today, however, the HMNS incident shows these guidelines aren’t just insufficient; they are actually creating a false sense of security that scammers are exploiting with surgical precision.

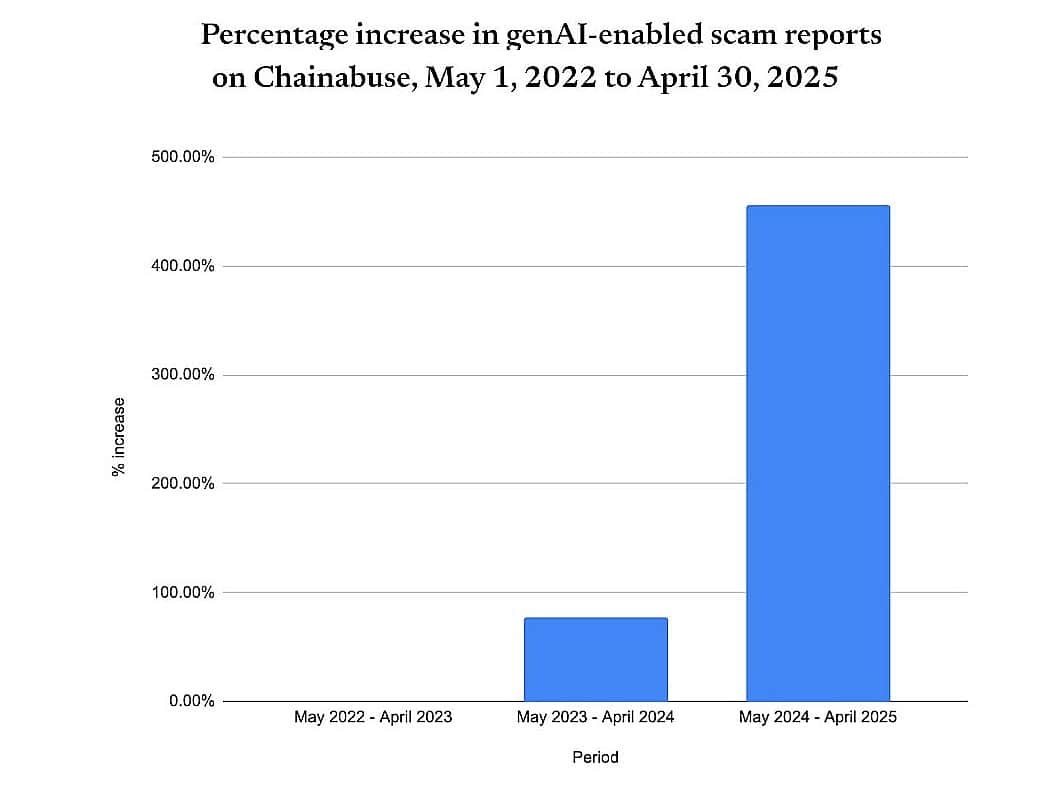

According to Chainabuse, reports of scams using generative artificial intelligence rose by 456% from May 2024 to April 2025 compared with the previous year. The year before had already shown a 78% spike. This isn’t just growth; it’s a sharp acceleration that traditional security advice never anticipated.

Source: TRM Labs

The problem isn’t that people are ignoring security advice. The problem is that the advice itself was built for a world that no longer exists.

Why This Problem Is Worse Than Social Platforms Admit

Social media platforms have a vested interest in downplaying the severity of AI-powered scams. Every admission of vulnerability threatens user confidence and, more importantly, advertiser confidence. But the numbers tell a different story than platform PR departments want you to hear.

Recent attacks reveal a sophistication and scope that platforms won’t acknowledge. In January 2025, both NBA and NASCAR league accounts were compromised simultaneously, pushing fake “$NBA coin” and “$NASCAR coin” promotions. This wasn’t random; it was coordinated. The same month, the UFC’s official Instagram account fell victim to a fraudulent crypto campaign tagged “#UnleashTheFight.”

What platforms won’t tell you is that these attacks succeeded not despite their security measures, but because those measures are fundamentally mismatched against AI-powered threats. Traditional account security was designed to stop human hackers using human methods. AI-powered attacks operate at machine speed with machine precision, exploiting vulnerabilities faster than human-designed systems can respond. Meta, X, and other platforms remain deliberately reactive because proactive measures would require admitting the true scale of the problem, along with the substantial infrastructure investment needed to address it.

Here’s what security experts won’t tell you: social media platforms have economic incentives that directly conflict with scam prevention. Engagement-driven algorithms amplify controversial content, including scam content that generates comments, shares, and reactions. The same systems that make platforms profitable make them vulnerable to exploitation. Effective scam prevention would require platforms to sacrifice some engagement for security, a trade-off that shareholders won’t accept without regulatory pressure.

The Real Reason Museums and Sports Teams Are Prime Targets (It’s Not What You Think)

Conventional wisdom suggests hackers target these accounts for their follower counts and credibility. But this misses the deeper, more troubling reason: these institutions represent human curation at its most trusted level.

When a crypto promotion appears on a museum’s account, followers don’t just see an endorsement; they see what appears to be institutional vetting. Museums, sports teams, and cultural institutions occupy a unique psychological space where audiences reasonably assume content has been reviewed by responsible human gatekeepers.

This isn’t about reaching large audiences. It’s about exploiting trust architectures that took decades to build. A crypto scam on a random influencer’s account faces natural skepticism. The same scam on the Vancouver Canucks’ account (hijacked on May 5th) or a NASCAR account bypasses that skepticism entirely. The selection of these targets reveals sophisticated psychological profiling that traditional security frameworks never considered. Scammers aren’t just stealing accounts; they’re hijacking decades of institutional trust-building.

Why Current Solutions Won’t Work

The crypto industry’s response to AI-powered scams follows a predictable pattern: more education, better verification, stronger passwords. This approach fundamentally misunderstands the problem.

Current solutions assume the vulnerability lies with users who need better training or platforms that need better detection. After recent hacks at Coinbase, BitoPro and Cetus, each platform followed up with the same tired formula of press releases about ‘enhanced’ security precautions going forward. It has always been ‘too little, too late’, but these days the criminal’s toolkit has expanded exponentially. AI-powered scams are exploiting something deeper: the cognitive shortcuts that make digital communication possible in the first place.

When you see content from a verified account you trust, your brain engages in what psychologists call “cognitive offloading”: delegating verification to the platform and institution. This isn’t a bug in human cognition; it’s a feature that allows us to process thousands of digital interactions daily without exhausting our mental resources.

Traditional security advice asks people to disable this cognitive architecture entirely and treat every interaction with maximum skepticism. This isn’t just impractical; it’s psychologically impossible at scale. But for now, it really is all we’ve got.

The Authentication Theater Problem

Two-factor authentication, account verification, and platform badges represent what security researchers increasingly call “authentication theater”: visible security measures that provide psychological comfort while offering minimal protection against sophisticated attacks.

The HMNS incident demonstrated how verification badges can actually increase vulnerability. The badge didn’t just fail to prevent the attack; it amplified the attack’s effectiveness by lending institutional credibility to fraudulent content. This creates a paradox that traditional security advice can’t resolve: the same mechanisms designed to establish trust become weapons for exploiting it.

What Traditional Advice Gets Wrong About AI

Standard crypto security advice treats AI as merely a more sophisticated version of existing threats. This fundamentally misjudges the qualitative shift AI represents.

Previous crypto scams required human creativity, manual social engineering, and time-intensive content creation. AI-powered scams operate continuously, testing thousands of variations simultaneously, learning from each interaction, and evolving in real time. When traditional advice suggests “being skeptical of too-good-to-be-true offers,” it assumes static scam tactics. But AI-powered scams dynamically adjust their promises, timing, and presentation based on target response patterns. The “too-good-to-be-true” threshold becomes a moving target that adapts faster than human awareness can track.

The Deepfake Dilemma

Deepfake technology has reached a level of sophistication where traditional “red flags” no longer apply. The Elon Musk deepfakes used in recent scams aren’t just convincing; to most viewers they are indistinguishable from authentic content. The crypto industry’s response, asking users to become amateur forensic analysts, shifts responsibility in a way that’s both unfair and ineffective.

Conclusion

The failure of traditional crypto security advice isn’t accidental; it’s structural. These approaches emerged when crypto scams were human-scale problems requiring human-scale solutions. AI-powered scams represent a category change that requires entirely new frameworks.

Effective protection against AI-powered crypto scams requires acknowledging uncomfortable truths about the limitations of individual vigilance, the inadequacy of current platform security, and the need for systemic rather than behavioral solutions.

The Houston Museum of Natural Science incident wasn’t just another data point in rising crypto scam statistics. It was a preview of a future where traditional security advice doesn’t just fail; it creates the very vulnerabilities it claims to prevent. Until we’re honest about these realities, we’ll continue fighting tomorrow’s threats with yesterday’s tools.

Aditya Das